How OnlyFans Content Moderation Actually Works: AI, Human Review, and the Gray Areas

OnlyFans moderation is a layered system built on automation, escalation, and policy review. The hard part is not the obvious violations but the edge cases.

Platform News & Analysis

Editorial Boundary: This article is editorial analysis, not legal, tax, financial, insurance, privacy, or platform-policy advice. Rules vary by jurisdiction, platform, account status, and business structure. Creators should confirm high-stakes decisions with a qualified professional.

OnlyFans moderation is often described as a black box, but the actual system is easier to understand if you break it into layers. Automated tools scan uploads, account behavior, and reported content. Human reviewers then handle the cases that machines cannot confidently classify. Policy teams define the rules, while trust and safety operations decide how aggressively those rules should be enforced across a global creator base.

That layered approach is standard for any large content platform, but adult-content moderation is harder because the consequences of failure are higher. A missed violation can create legal, financial, and reputational problems fast. Over-enforcement creates creator frustration, account suspensions, and payout delays. OnlyFans has to sit in the middle, which means the moderation system is as much about risk management as it is about content policy.

The First Pass Is Automated

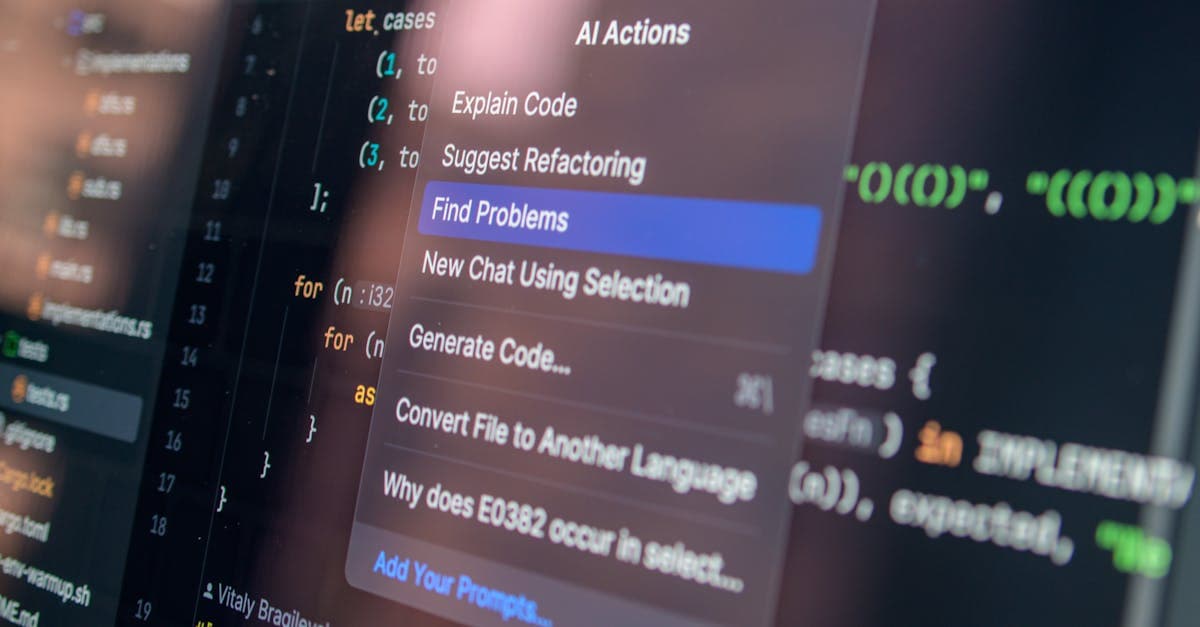

The first stage of moderation is usually machine-based. Images, video, text, and metadata can all be checked for known markers: obvious nudity policy violations, banned objects, underage indicators, spam patterns, duplicate files, and suspicious account behavior. The system is designed to catch the easy cases quickly so human moderators do not drown in volume.

Automation also helps with consistency. A platform handling millions of uploads cannot rely on human memory alone. Hash matching can flag previously removed content, classifiers can score nudity or explicitness, and behavior models can surface accounts whose activity suddenly changes. These systems are not perfect, but they reduce the number of obviously disallowed uploads that reach the next stage.
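Hash matching is the easiest of these checks to picture. The article does not say which hashing scheme OnlyFans uses. The sketch below assumes a simple average hash (aHash), a basic perceptual hash, so that lightly edited re-uploads of removed content can still match. It also assumes each image has already been downscaled to an 8x8 grayscale thumbnail. The `max_distance` cutoff is a hypothetical value, not a platform setting, and real systems typically use sturdier perceptual hashes than this toy.

```python
from typing import Iterable, Sequence


def average_hash(pixels: Sequence[int]) -> int:
    """Toy average hash (aHash) over an 8x8 grayscale thumbnail.

    Assumption: the image was already resized to 8x8 and converted to
    grayscale values in 0-255 before reaching this function.
    """
    if len(pixels) != 64:
        raise ValueError("expected 64 grayscale values (an 8x8 thumbnail)")
    mean = sum(pixels) / len(pixels)
    bits = 0
    for value in pixels:
        # One bit per pixel: 1 if brighter than the thumbnail's mean.
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def matches_removed_content(
    pixels: Sequence[int],
    removed_hashes: Iterable[int],
    max_distance: int = 5,  # hypothetical tolerance, not a real platform value
) -> bool:
    """Flag an upload whose hash is close to any previously removed item."""
    upload_hash = average_hash(pixels)
    return any(hamming_distance(upload_hash, h) <= max_distance for h in removed_hashes)


# Usage: a slightly brightened copy still matches; an unrelated image does not.
original = [i * 4 for i in range(64)]
brightened = [min(255, p + 3) for p in original]
removed = {average_hash(original)}
print(matches_removed_content(brightened, removed))      # True
print(matches_removed_content(original[::-1], removed))  # False
```

An exact cryptographic hash such as SHA-256 would only catch byte-identical re-uploads. A perceptual hash trades that precision for tolerance to small edits, which is also why it can produce false matches that need a second look.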

The weakness is that automation is best at pattern recognition, not context. A medical image, a lingerie shoot, and a sexually explicit clip can all share visual traits that confuse a classifier. That is why a strong moderation system needs thresholds, escalation rules, and a second layer of human review instead of pretending the model is the final authority.
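The threshold-and-escalation idea can be sketched as a three-band router. This is a hypothetical illustration, not OnlyFans' actual logic. The score is assumed to be a classifier's estimated probability of a violation, and the cutoffs `clear_below` and `block_at` are invented for the example. Only the high-confidence band triggers immediate enforcement, and the uncertain middle goes to a human. As a further assumption, sensitive categories such as possible age concerns are never auto-cleared.

```python
from enum import Enum


class Route(Enum):
    AUTO_CLEAR = "auto_clear"      # publish without human review
    HOLD_FOR_REVIEW = "hold"       # hold until a reviewer decides
    ENFORCE = "enforce"            # high-confidence violation


def route_upload(
    violation_score: float,
    sensitive_category: bool = False,
    clear_below: float = 0.2,  # hypothetical threshold
    block_at: float = 0.98,    # hypothetical threshold
) -> Route:
    """Route an upload using a classifier score in [0, 1].

    Assumption: sensitive categories are never auto-cleared, so a human
    always looks at them unless the score is already in the enforce band.
    """
    if not 0.0 <= violation_score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if violation_score >= block_at:
        return Route.ENFORCE
    if violation_score < clear_below and not sensitive_category:
        return Route.AUTO_CLEAR
    return Route.HOLD_FOR_REVIEW


print(route_upload(0.05))                           # Route.AUTO_CLEAR
print(route_upload(0.05, sensitive_category=True))  # Route.HOLD_FOR_REVIEW
print(route_upload(0.6))                            # Route.HOLD_FOR_REVIEW
print(route_upload(0.99))                           # Route.ENFORCE
```

Raising `clear_below` sends less work to reviewers but lets more borderline content through. Lowering `block_at` enforces faster but raises the false-positive cost that creators feel.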

Human Review Handles The Edge Cases

Once an account or asset is flagged, human reviewers examine the context. They look at captions, account history, prior enforcement, and whether the material fits a category that is allowed under platform policy. On adult platforms, context is everything. A piece of content can be technically explicit and still legal, policy-compliant, and commercially acceptable.

The human layer is where moderation gets expensive. Reviewers must understand policy nuance, regional restrictions, and content context quickly enough to keep turnaround times reasonable. A platform can tolerate a delay of a few hours on a suspicious upload. It cannot afford to leave payouts or account access unresolved for days if it wants creators to trust the system.
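One way to encode that difference in tolerable delay is to give each review item a deadline and always work the earliest deadline first. The sketch below assumes hypothetical item types and review targets: a few hours for uploads, less for anything blocking payouts or account access. The article gives no actual figures, so these values are illustrative only.

```python
import heapq
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical review targets per item type; not published platform values.
SLA = {
    "upload": timedelta(hours=6),
    "payout_hold": timedelta(hours=2),
    "account_lock": timedelta(hours=1),
}


@dataclass(order=True)
class ReviewItem:
    deadline: datetime                 # the only field used for ordering
    item_id: str = field(compare=False)
    kind: str = field(compare=False)


class ReviewQueue:
    """Earliest-deadline-first queue for human review."""

    def __init__(self) -> None:
        self._heap: list[ReviewItem] = []

    def add(self, item_id: str, kind: str, flagged_at: datetime) -> None:
        deadline = flagged_at + SLA[kind]
        heapq.heappush(self._heap, ReviewItem(deadline, item_id, kind))

    def next_item(self) -> ReviewItem:
        # Raises IndexError if the queue is empty.
        return heapq.heappop(self._heap)


t0 = datetime(2024, 1, 1, 9, 0)
queue = ReviewQueue()
queue.add("clip-17", "upload", t0)                              # due 15:00
queue.add("acct-4", "account_lock", t0 + timedelta(hours=2))    # due 12:00
queue.add("payout-9", "payout_hold", t0 + timedelta(minutes=30))  # due 11:30
order = [queue.next_item().item_id for _ in range(3)]
print(order)  # ['payout-9', 'acct-4', 'clip-17']
```

Earliest-deadline-first lets money- or access-blocking cases jump ahead without starving an older upload forever: its deadline is fixed, so newer urgent items eventually fall behind it.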

This is also where false positives hurt. A creator whose account gets swept into a review queue may lose a day of sales or miss a high-intent traffic spike. That is why moderation teams usually try to distinguish between "hold for review" and "hard enforcement." The former slows things down; the latter can break a creator's business if applied too quickly.

The Gray Areas Are The Real Problem

The obvious violations are easy. The difficult calls involve consent, impersonation, AI generation, age ambiguity, and content that is legal in one context but disallowed in another. A face swap, a synthetic voice track, or a clip that resembles a real person can all trigger policy questions that no simple classifier can answer with confidence.

Age-related enforcement is especially sensitive. Platforms cannot afford to be casual about anyone who appears young, because the financial and legal downside of an error is severe. That means moderation teams often err on the side of caution, which can frustrate creators who believe they are being punished for aesthetic choices rather than policy breaches. In practice, the platform would rather over-review than explain a mistake to regulators later.

Copyright and reposted content create another layer of complexity. Some creators reuse their own clips across multiple accounts or platform ecosystems, while others submit licensed material that looks similar to stolen content. Moderation systems have to separate legitimate cross-posting from infringement, and that is much harder than blocking obvious pirated uploads.

Why The System Still Fails

Even a disciplined moderation stack can fail because the platform operates at large scale against adversaries who adapt. Bad actors learn which upload patterns trigger manual review, which keywords avoid detection, and how to mask objectionable content in ways that delay enforcement. Moderation is never static; it is a moving contest between policy enforcement and evasion.

The other failure mode is policy drift. A platform can tighten rules on paper but apply them unevenly in practice because reviewer teams interpret edge cases differently across shifts or regions. That inconsistency makes creators feel like enforcement is arbitrary. It also creates operational noise, because appeals and account disputes pile up when the rulebook is clear but the execution is not.
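Drift of this kind can be measured rather than guessed at. One common technique, which the article does not attribute to OnlyFans, is to have two reviewer groups (for example, two shifts) label the same calibration sample and compute Cohen's kappa. Kappa corrects raw agreement for the agreement expected by chance. The labels and sample below are hypothetical.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two reviewers on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Probability both pick the same label if each labeled at random
    # using its own observed label frequencies.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # convention: both used one identical label throughout
    return (observed - expected) / (1 - expected)


day_shift = ["allow", "allow", "hold", "remove", "hold", "allow"]
night_shift = ["allow", "hold", "hold", "remove", "allow", "allow"]
print(round(cohens_kappa(day_shift, night_shift), 2))  # 0.45
```

A kappa near 1 means the groups apply the rulebook the same way. Values well below that on a shared sample point to execution, not the written policy, as the source of inconsistency.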

OnlyFans is in a better position than many platforms because the business is built around verified creators and paid transactions, which creates more accountability. But verification is not the same thing as moderation, and neither one eliminates risk. The system still depends on how quickly the platform can detect problems, route them, and decide what to do next.

Creators should treat moderation history as a business record. Screenshots of notices, timestamps, edited captions, appeal outcomes, and repeated trigger patterns can reveal whether a problem is isolated or systemic. That record also helps teams train assistants and editors. If a certain phrase, prop, or format repeatedly triggers review, the creator can adjust production before a larger release is delayed.
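For a creator team, that record can be as simple as a spreadsheet. The sketch below assumes a hypothetical log format (date, stated reason, outcome) typed up from platform notices. It counts how often each reason recurs so that repeated triggers stand out. The field names and reason strings are invented for illustration and are not OnlyFans notice codes.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class ModerationNotice:
    received: date
    reason: str   # reason as written in the notice (hypothetical values below)
    outcome: str  # e.g. "restored", "removed", "pending"


def repeated_triggers(
    log: list[ModerationNotice], min_count: int = 2
) -> list[tuple[str, int]]:
    """Return reasons seen at least `min_count` times, most frequent first."""
    counts = Counter(notice.reason for notice in log)
    return [(reason, n) for reason, n in counts.most_common() if n >= min_count]


log = [
    ModerationNotice(date(2024, 3, 1), "caption keyword", "restored"),
    ModerationNotice(date(2024, 3, 9), "prop flagged", "removed"),
    ModerationNotice(date(2024, 3, 15), "caption keyword", "restored"),
    ModerationNotice(date(2024, 4, 2), "caption keyword", "pending"),
]
print(repeated_triggers(log))  # [('caption keyword', 3)]
```

A reason that keeps recurring, especially one that is usually restored on appeal, is a candidate for a production or caption change before the next large release.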

What This Means

The real lesson is that OnlyFans moderation is not a single tool. It is a chain of automated checks, reviewer judgment, and escalation logic built to minimize catastrophic mistakes while keeping the creator business usable. That is why moderation changes often feel invisible until they suddenly matter to a creator's revenue.

What to watch next is the growing role of AI-assisted review and synthetic-content enforcement. As generated media gets cheaper and harder to spot, moderation will become more about provenance, consent, and verification than simple explicit-content filtering. Platforms that handle that shift well will keep creators. Platforms that get it wrong will spend more time in appeals than in growth.

The next operational test is scale under pressure. If creator growth rises while moderation staffing stays flat, the queue expands and confidence falls. If the platform overcorrects with hard enforcement, creators feel punished for edge cases. The right answer sits between those two failure modes, which is why moderation teams end up acting like risk managers rather than pure policy police.
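The arithmetic behind a growing queue is simple. Each day's backlog is the previous backlog plus new flags minus what reviewers clear, floored at zero. The sketch below uses made-up daily volumes growing roughly 10% a day against a flat capacity. The numbers are hypothetical, not OnlyFans data.

```python
def backlog_over_time(daily_flags: list[int], daily_capacity: int) -> list[int]:
    """End-of-day backlog with fixed reviewer capacity.

    backlog_today = max(0, backlog_yesterday + new_flags - daily_capacity)
    """
    backlog = 0
    history = []
    for flags in daily_flags:
        backlog = max(0, backlog + flags - daily_capacity)
        history.append(backlog)
    return history


# Hypothetical: flags grow about 10% a day; capacity stays at 1,000 reviews/day.
flags = [1000, 1100, 1210, 1331, 1464]
print(backlog_over_time(flags, 1000))  # [0, 100, 310, 641, 1105]
```

Once arrivals outpace capacity, the gap widens every day and each held item waits longer. That is the mechanism behind the queue expanding while creator confidence falls.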

Creators are not asking for no moderation. They are asking for moderation that is legible, fast, and consistent. That sounds simple, but it is exactly the kind of thing platforms struggle to deliver once volume gets large and the content is globally distributed. The more jurisdictions, categories, and account types the platform supports, the harder it is to keep every decision aligned.

That is also why appeal design matters so much. A good moderation system does not just remove bad content. It explains why something was held, gives the creator a path to fix it, and prevents repeat incidents from becoming a permanent business problem. Without that loop, moderation becomes a deterrent to serious creators.

The most likely future is a hybrid system with more automated scoring, more provenance signals, and more targeted human review. That will not eliminate false positives, but it should reduce the amount of wasted reviewer time. The platforms that get that balance right will end up with better creator loyalty and lower risk.

In other words, moderation is not just a compliance function. It is part of the product. Creators feel it every time they upload, appeal, or wait for a payout.

That is why moderation quality becomes visible in business behavior even when the platform does not advertise it. Creators build around systems they trust. When they feel the process is legible, they upload more confidently and commit more of their traffic to the platform.

The practical lesson is that moderation is not a side function attached to the business. It is one of the mechanisms that decides whether the business can keep scaling without turning into a support queue.

The platforms that get this right are usually the ones that treat moderation as product design. They write clearer rules, give creators better notices, and keep the review cycle short enough that people can still run their businesses. That is operational work, but it has direct revenue consequences.

The platforms that get it wrong create a different kind of friction. Creators spend more time second-guessing uploads than producing them, and that reduces activity even when no formal ban occurs. The moderation system then becomes the bottleneck instead of the protection layer.

That is why this topic keeps showing up in platform strategy conversations. A good moderation stack is not just safer. It is part of what lets the business feel usable at scale.

As the creator base gets larger and the content gets more synthetic, the moderation stack has to become more disciplined, not less. That makes trust and enforcement part of the core operating model.
